Here at TechMikeNY, we love Raspberry Pis. And as you know, we’re pretty big on watching out for the environment. So when we found the Pimoroni Enviro + Air Quality sensor kit for Raspberry Pi, we realized we had to try it out. The Enviro+’s sensors can monitor environmental conditions like temperature, humidity, and air quality - and they’re all on one teeny board! And as it turns out, there’s a lot you can do with all that data.

If you want to level up skills around containerization and remote access/management (or just want another excuse to set up a Raspberry Pi), this one’s for you. In this guide, we'll walk through the complete process of installing the Enviro+ on your Raspberry Pi and configuring it to visualize sensor data in a user-friendly dashboard.

This guide covers multiple different parts of the process. Aka, it’s a little… long. Feel free to set this up in chunks; stretching during reboots is always a good idea. :-)

══════════════════

It all started in 2020, when a Raspberry Pi walked into the TechMikeNY office. That is, a Raspberry Pi 3B+. At the time, Pis (among other shiny things like toilet paper) had been going for up to 3x their price. So when resident Pi-head Stanley discovered it hiding in a box under a bunch of wires, he knew he couldn’t just let it sit there. Four years and a fair share of Instructables tutorials later, this little box took on a life of its own. Of course, Pi tastes way better when it’s shared, so consider this guide a slice on us!

The Pi in question. It even has its own case.

Setup Checklist

☐ A Raspberry Pi model capable of internet connection

☐ An SD Card. TechMikeNY and Raspberry Pi recommend flashing Raspberry Pi OS Lite (64-bit) to a Micro SD card of at least 16GB.

☐ Some kind of screen (and a cable to link said screen to your Raspberry Pi)

☐ Pimoroni’s Enviro for Raspberry Pi – Enviro + Air Quality (Disclaimer: not sponsored. We found ours at MicroCenter.)


NOTE: A good chunk of the process for setting up this Pi kit will be done through some pretty straightforward command line. This is nice because without a full GUI, the things you’ll have running are lighter and more efficient, meaning that most of the CPU will be dedicated to the Enviro+ Sensor HAT. 🎩


Getting Your Pi Ready

- Attach the Enviro board to your Pi.

It should look a little something like this.

- Install Raspberry Pi OS.

Download Raspberry Pi Imager here: Raspberry Pi Imager

- Flash Raspberry Pi OS.

Open up the Raspberry Pi Imager if it has not automatically started.

From the initial screen, indicate your Pi model & operating system, and select the SD card you would like to flash the OS to.

You will be asked if you want to customize your OS. This step is necessary to enable SSH and set up a log-in, so hit [Edit Settings] to continue.

- Apply OS Customisation Settings

Under the “General” tab, configure the following OS customisation settings:

- Set your hostname. This is what you’ll refer to when you’re trying to log in and setting up later containers. The default is raspberrypi, but you can call it whatever you want to call it, especially if you have a bunch of other Pis.

- Set your username and password. This will be your master admin password (so do what you need to to remember it).

- (Optional) Configure wireless LAN. You’ll only need to do this if you’re planning on connecting to your Pi via WiFi; if you’re planning on connecting via an Ethernet cable, you can leave this blank.

- Set locale settings. Sometimes these will be synced, but just double check that you’ve got the right time zone going.

After you've done that, switch to the “Services” tab of the customization menu. Enable SSH and hit [Save]. You’ll be asked if you want to apply changes; hit [Yes].

Once the OS is flashed onto the card, insert the card back into your Pi and power on the Pi.

- SSH into Raspberry Pi

Open up a terminal on your main machine: run CMD as administrator on Windows, or Terminal on macOS/Linux. The Pi's first startup will take 2-3 minutes, so give it a second to think.

SSH into your Pi using the username and hostname you configured in OS settings.

ssh "username"@"hostname"

(Example: If you left both names as defaults, the command will look like ssh pi@raspberrypi.)

Enter the password when prompted.

- Update Raspberry Pi OS

Run the following commands to check for updates, apply them, and reboot the system:

sudo apt update
sudo apt upgrade
sudo shutdown -r now

Say yes to any permissions as needed.

Importing the Python Library (and Testing It Out)

Pimoroni provides the Enviro+ Python library to interact with the Enviro+ board and its sensors, complete with example scripts. This allows you to read data from the board and its various components like the displays and sensors.

For the Enviro+ board's onboard displays and sensors to function properly, you’ll need to install both the Enviro+ Python library and the Python imaging library.

Pimoroni also has a primer to getting started with Enviro Plus, in case you want to follow along: Getting Started with Enviro+

- Install Enviro+ Python library

Run these commands to install the library:

sudo apt install git
git clone https://github.com/pimoroni/enviroplus-python
cd enviroplus-python
./install.sh
sudo shutdown -r now

- Install Python imaging library

Install the Python imaging library:

sudo apt-get install python3-pil

- Test example scripts to get acquainted with the sensors

Now comes the fun part! Run these commands to activate the virtual environment and test out Pimoroni’s example scripts:

source ~/.virtualenvs/pimoroni/bin/activate
cd enviroplus-python/examples
ls
python "example.py"

(Swap "example.py" for the name of any script that ls lists.)

If you would like to see all of the examples in one place, use the aptly-named

python all-in-one.py

and tap on the light sensor to cycle through them.

Once you’re done playing around with what the sensors can do, use Ctrl+C to stop. When you want to exit the virtual environment, run the deactivate command.
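If you'd rather poke at the sensors from your own script than from Pimoroni's examples, here's a minimal sketch of what that could look like. It assumes the Pimoroni virtual environment is active and that the bme280, ltr559, and enviroplus modules set up by install.sh are importable. The names read_sensors, format_reading, and UNITS are ours, made up for illustration. The hardware imports sit inside read_sensors so the file still loads on a machine without the board.

```python
# Units for each reading, used only for display.
UNITS = {
    "temperature": "°C",
    "pressure": "hPa",
    "humidity": "%",
    "lux": "lux",
    "oxidising": "kΩ",
    "reducing": "kΩ",
    "nh3": "kΩ",
}


def read_sensors():
    """Take one sample from the Enviro+ (needs the board and Pimoroni's libraries)."""
    # Imported inside the function so this file can still be loaded on a
    # machine that has no Enviro+ attached.
    from bme280 import BME280
    from ltr559 import LTR559
    from enviroplus import gas

    bme280 = BME280()
    ltr559 = LTR559()
    gas_reading = gas.read_all()  # resistances in ohms
    return {
        "temperature": bme280.get_temperature(),
        "pressure": bme280.get_pressure(),
        "humidity": bme280.get_humidity(),
        "lux": ltr559.get_lux(),
        "oxidising": gas_reading.oxidising / 1000,  # ohms -> kiloohms
        "reducing": gas_reading.reducing / 1000,
        "nh3": gas_reading.nh3 / 1000,
    }


def format_reading(reading):
    """Turn a dict of readings into one aligned line per sensor."""
    lines = []
    for name, value in reading.items():
        unit = UNITS.get(name, "")
        lines.append(f"{name:<12}{value:8.1f} {unit}".rstrip())
    return "\n".join(lines)
```

On the Pi, print(format_reading(read_sensors())) should give you one line per sensor. Treat it as a starting point rather than a replacement for the example scripts.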

You’ve reached Stretch Break #1! Take a breather, then dive back in when you’re ready.

Configure New Docker Container Stack

So you’ve got some sensors, and you can read the data they’re collecting. Nice. But what if you want to see all of that information in pretty graphs? Well, there’s an app for that. 😉

This next part involves setting up a message broker to broadcast Enviro+ sensor data to a linked database. In order to view the data from other devices while being resource-efficient (and learning something cool), we’re going to set up a Raspberry Pi IoT server using IOTstack.

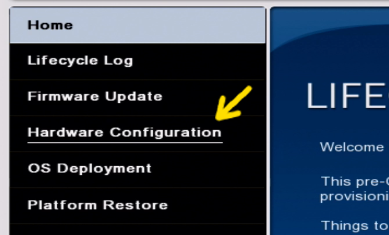

IOTstack allows you to use your Pi as the central hub for connecting various IoT devices and running different services. In our case, it's going to fit together a little like this:

Visual from the Learn Embedded Systems tutorial: Easy Raspberry Pi IoT Server

With IOTstack, you can spin up containers without having to manually write out a docker-compose file yourself. In that sense, it’s kinda like an app store where you can pull stuff off the shelf, and it can save you a good amount of time and money. That combined with its friendly presentation makes it a pretty great introduction to containerization.

NOTE: Prior to configuring the new docker container stack, make sure that you're back at your home directory (and out of the Python virtual environment). One easy way to do that is just to reboot and SSH back in.

- Install IOTStack

Download IOTStack:

curl -fsSL https://raw.githubusercontent.com/SensorsIot/IOTstack/master/install.sh | bash

Then reboot once the download is complete:

sudo shutdown -r now

Once the system restarts, SSH back into Raspberry Pi (same as Step 3). Then, navigate into the IOTStack folder and run the menu script:

cd IOTstack
./menu.sh

Doing so should bring you directly to the main menu.

Navigation Note:

- ↑ and ↓ to move between options.

- [Enter] to continue.

- Configure and Build IOTstack

Time to select the apps that you’re going to create containers for. Select “Build Stack” and press [Enter] to enter the IOTstack Build Menu.

Select each app you want to add and press [Space] to include it in your stack.

You’re going to be selecting the following apps:

- Grafana (analytics and interactive visualization)

- InfluxDB (time series database)

- Mosquitto (message broker)

- Node-RED (programming tool)

- Portainer-CE (GUI to see docker containers you have live without having to SSH in)

Hit [Enter] to build the docker-compose.yml file.

Node-RED Troubleshooting: If the Node-RED option has a yellow warning sign near it, you’ll need to manually build its .yml file. To do so, hit the right arrow to navigate to NodeRed Options. Choose “Select & overwrite addons list” from the list; there, the first 6 add-ons should be automatically selected. Hit [Enter] to build and save the add-ons list.

You can then start all of these containers with the “Start Stack” command. If you want to check that everything is running, use the command:

docker-compose ps

(Run it from inside the IOTstack folder, where the docker-compose.yml file lives.)

After you’ve installed IOTstack, you can confirm what the assigned IP address is by entering

ifconfig
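If you want a quick check from your main machine that the containers are actually answering, here's a small sketch that tries to open a TCP connection to each service. The ports for Portainer (9000), Node-RED (1880), Grafana (3000), and InfluxDB (8086) are the ones used in this guide; 1883 for Mosquitto is the usual MQTT port and is an assumption here, as are the function names and the example IP address in the comment.

```python
import socket

# Ports used in this guide; 1883 for Mosquitto is assumed (the common MQTT default).
SERVICES = {
    "Portainer": 9000,
    "Node-RED": 1880,
    "Grafana": 3000,
    "InfluxDB": 8086,
    "Mosquitto": 1883,
}


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_services(host, services=SERVICES):
    """Map each service name to True (reachable) or False (not reachable)."""
    return {name: port_open(host, port) for name, port in services.items()}


# Example (hypothetical IP address, swap in your Pi's):
# for name, ok in check_services("192.168.1.50").items():
#     print(f"{name:<10} {'up' if ok else 'down'}")
```

An open port only tells you something is listening, not that the service is healthy, so Portainer or docker-compose ps is still the better source of truth.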

TechMike Tip: If you don’t feel like SSH-ing in, you can log into Portainer by entering http://[Insert your RaspberryPi IP address]:9000 into any browser. From there, you can create an admin account to view the current containers’ status.

- Create InfluxDB database

Exit the IOTstack menu and return to your regular terminal. You’ll need to create a database in which the Pi can log all of the measurements while the Enviro+ is running. Enter the influx Docker container:

docker exec -it influxdb influx

Create the database:

CREATE DATABASE sensor_data

Then exit the InfluxDB shell to get back into your main session:

quit

- Start Mosquitto

Mosquitto is a message broker, which we’ll use to broadcast the readings from Pi’s sensors into the InfluxDB database. To set up Mosquitto:

Activate the virtual environment:

source ~/.virtualenvs/pimoroni/bin/activate

and run the following Python script:

cd ~/enviroplus-python/examples/
python mqtt-all.py

Turning Off the Glow: If you have your Enviro kit running in your room and you don’t want a blinding light while you’re sleeping (or want to save a little bit of energy), you can turn off the LCD display by modifying mqtt-all.py. To do so, hit Ctrl+C to stop the Python script, then enter

nano mqtt-all.py

to open the text editor.

This is the script that should be shown. After the line “disp.display(img)”, add the line “disp.set_backlight(0)”.

Stretch Break #2! This one’s preventative, TBH. Drink some tea, feed your cat, water your plants, say goodnight to your kids (etc) before you settle back in.

- Create Node-RED flow

In order to actually get the data from MQTT to InfluxDB, you will need to subscribe to the broadcast and log the sensor readings to the newly created database. The easiest way to set this flow up is via Node-RED, a visual programming language. We learned a lot from Lambiase Light Industries - if you want more details, here’s the lovely tutorial they put together.

Navigate to your web browser and log into the Node-RED container:

http://[Insert your RaspberryPi IP address]:1880

The menu on the left-hand side has categories for different kinds of nodes. To put together your flow, select the nodes the flow consists of:

- Network: mqtt in

- Parser: json

- Common: debug 1

- Function: change (x7, for each sensor output)

- Storage: influx db out (x7, for each sensor output)

Link them together:

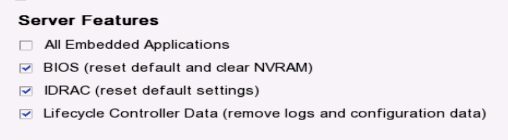

You’re looking to end up with a diagram that looks like this:

(Note: The labels in the diagram above reflect changes made during set-up, which is covered in the next step.)
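To make the flow a little less abstract, here's roughly what those nodes do, sketched in Python instead of Node-RED: one JSON message comes in, gets parsed, and is split into one value per sensor, each headed for its own measurement. The example message and its keys are assumptions for illustration only; the real names are whatever paths show up in your own debug panel. split_message and MEASUREMENTS are hypothetical names.

```python
import json

# Hypothetical message, standing in for what arrives on the "enviroplus" topic.
EXAMPLE_MESSAGE = json.dumps({
    "temperature": 22,
    "pressure": 101320,
    "humidity": 41,
    "oxidised": 18,
    "reduced": 410,
    "nh3": 95,
    "lux": 120,
    "serial": "0000000012345678",
})

# One entry per change + influxdb pair in the flow (assumed key names).
MEASUREMENTS = ["temperature", "pressure", "humidity", "oxidised", "reduced", "nh3", "lux"]


def split_message(raw, measurements=MEASUREMENTS):
    """Parse one JSON message and return a (measurement, value) pair per sensor."""
    payload = json.loads(raw)  # what the json node does
    points = []
    for name in measurements:  # what each change node does: pick out one path
        if name in payload:
            points.append((name, payload[name]))  # what each influxdb node stores
    return points


for measurement, value in split_message(EXAMPLE_MESSAGE):
    print(f"{measurement} -> {value}")
```

Anything not listed in MEASUREMENTS (like the serial field in this made-up message) is simply ignored, which mirrors only wiring up the sensors you care about.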

- Configure Node-RED nodes

Now, to actually set it up. Double click on the node blocks to configure their properties.

6-A. Starting from the top: the network node (mqtt in).

Here, you’ll need to change the Topic and the Output. Set the Topic to "enviroplus" and the Output to auto-detect (string or buffer).

Next, you're going to modify the rules for each of the change and storage pairs to transmit each of the 7 sensors. (At this point, the change nodes are labeled “set msg.payload” and the storage nodes are labeled “influxdb”.)

6-B. Change nodes:

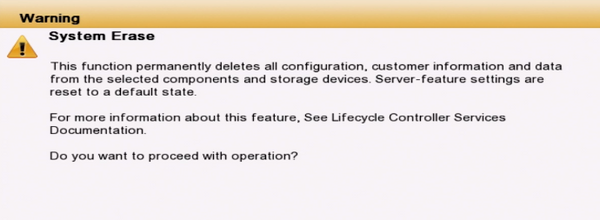

Now to set up the change nodes. You will be setting all of these to the path of the sensor you want that particular node to pick up. To do so, go into the change node properties, then copy and paste each sensor’s path from the debugger, as shown below:

6-C. Influxdb nodes:

Label the influxdb nodes to match their corresponding change nodes.

Make sure the pairs match.

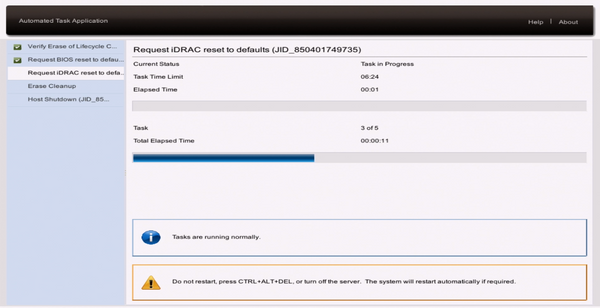

Then, go into the Server properties within the influxdb nodes (hit the pencil button to edit).

Set the host to your RaspberryPi IP address, and set the database to “sensor_data”.

⚠️ Save yourself some time: before you deploy this flow, make sure that your nodes are configured correctly, especially for the MQTT output. If you put in the wrong message type, it’s not going to push correctly and it might get FUBAR.

When you’ve double checked that everything matches up, hit the [Deploy] button and celebrate!

Setting up Grafana

Home stretch! Grafana is where you will be able to see all of your data visualized.

- Make a Grafana account

In a new browser tab, open Grafana by entering

http://[Insert your RaspberryPi IP address]:3000

Set up an account. (The default username and password are both admin.)

- Add your datasource (influxdb)

From the home dashboard, click on "Add your first data source".

You'll see a list of data source types. Select "InfluxDB".

You'll be taken to a settings page. There, you can name the InfluxDB.

For the URL, enter http://[Insert your RaspberryPi IP address]:8086

Configure the "Details" section as follows:

Database: sensor_data

HTTP Method: GET

Min Interval: 2s

Hit [Save & Test]. If all is well, you'll see a message saying: "datasource is working. 7 measurements found".

- Create dashboard elements for corresponding sensors

To create your dashboard, navigate back to the Home screen and select "Create your first dashboard". Click "Add Visualization", and select the InfluxDB that you configured in the previous step.

Once you've got that going, you can set up a panel for each individual sensor. (You can also modify how often you are receiving the measurements by setting the refresh interval and absolute time range.)

Temperature = measured in degrees Celsius (°C)

Humidity = measured in percentage (%)

Gas (Oxidising, Reducing, NH3) = measured in kiloohms (kΩ). These three channels respond to gases such as nitrogen dioxide (oxidising), carbon monoxide (reducing), and ammonia (NH3).

Light = measured in lux (lx)
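If you end up typing panel queries by hand instead of clicking through Grafana's query builder, here's a small sketch that generates an InfluxQL query for each panel. It leans on two assumptions: that each influxdb node writes its number to a field called "value", and that your measurement names match the ones listed here. Check both against what Grafana's dropdowns show for your database. panel_query and PANELS are hypothetical names.

```python
# Assumed measurement names; use whatever your influxdb nodes actually write to.
PANELS = ["temperature", "humidity", "oxidised", "reduced", "nh3", "lux"]


def panel_query(measurement, field="value", aggregate="mean"):
    """Build an InfluxQL query that uses Grafana's time-range and interval macros."""
    return (
        f'SELECT {aggregate}("{field}") FROM "{measurement}" '
        "WHERE $timeFilter GROUP BY time($__interval) fill(null)"
    )


for name in PANELS:
    print(panel_query(name))
```

$timeFilter and $__interval are filled in by Grafana from the dashboard's time picker, so the same query keeps working as you zoom in and out.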

The sensors are not perfectly precise (e.g., “temperature” will be impacted by whatever cooling mechanism you have for your Pi), but you’ll be able to see trends over time and get insights on your physical environment.
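For the temperature drift in particular, one common workaround is to subtract a share of the CPU's heat from the raw reading. Here's a minimal sketch of that idea, assuming the CPU temperature can be read from the standard Linux thermal file on the Pi. The factor of 2.25 is an assumed starting point, not a calibrated value; you'd tune it against a thermometer you trust. Both function names are ours.

```python
def read_cpu_temperature(path="/sys/class/thermal/thermal_zone0/temp"):
    """Read the CPU temperature in °C (the file holds thousandths of a degree)."""
    with open(path) as f:
        return int(f.read().strip()) / 1000.0


def compensate_temperature(raw_temp_c, cpu_temp_c, factor=2.25):
    """Estimate ambient temperature by removing part of the CPU's influence.

    A smaller factor subtracts more; a larger factor subtracts less.
    """
    return raw_temp_c - ((cpu_temp_c - raw_temp_c) / factor)


# Example: the sensor reads 30 °C while the CPU sits at 48 °C.
print(compensate_temperature(30.0, 48.0))  # prints 22.0
```

Averaging the CPU temperature over several readings before compensating should smooth out the jitter, and mounting the board on a header extender so it sits further from the CPU helps too.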

And there you have it. See? That wasn't so bad after all!

Ready for more?

Then we’ve got some pretty good news; the skills you just used are super transferable. For example, now that you have experience deploying containerized applications and services, you could spin up a full home media server setup complete with Plex and all the works. Or put your new remote monitoring abilities to use visualizing hardware metrics and resource usage across your entire server rack. Now that you’ve essentially set up a localized weather station, the possibilities are endless.

And don't worry about having to start from scratch: TechMikeNY's refurbished Dell Optiplex machines and other servers can come pre-configured with Ubuntu, so you can hit the ground running installing Docker containers, dashboards, and more. So basically, you’ll be unstoppable.

What's your next big project going to be? We'd love to hear about it! Share your latest homelab adventures with us in the comments below or send them our way via info@techmikeny.com
